Why Carbon-Aware Systems Can Cost More and Still Be the Right Choice

Carbon-aware infrastructure sometimes costs more than the cheapest alternative. This article explains why that premium is not wasted money — it's a hedge against regulatory risk, a reduction in technical carbon debt, and often, a better engineering decision.

- Dr. Vivek Shilimkar

- 13 min read

In the previous article, I made the case that cost and carbon are not the same thing — that cheap cloud infrastructure can emit significantly more carbon than slightly more expensive alternatives. The response I got from several readers and colleagues was some version of the same question:

“Okay, I understand they’re different. But my budget is real. My sustainability goals are aspirational. When the two conflict, cost wins. Every time.”

I get it. I completely understand the argument that the quarterly cloud bill matters more than an annual sustainability report. I am sure, well-intentioned carbon reduction initiatives will get shelved the moment someone pointed out they’d increase infrastructure spend by 12%.

But I think this framing — carbon vs. cost, idealism vs. pragmatism — is incomplete. It assumes that the only value of carbon-aware infrastructure is environmental, and that any cost premium is essentially a charitable donation to the planet. That’s not the case always.

Carbon-aware systems can cost more and still be the right choice — not just ethically, but strategically, technically, and increasingly, financially.

The Carbon Premium Is Real — Let’s Not Pretend Otherwise

Before making the case for paying more, I want to be honest about what “more” looks like. Carbon-aware decisions do sometimes come with a cost premium.

Running workloads in a Nordic region with a clean energy grid might cost 10-20% more than a coal-heavy region in another part of the world. Choosing newer, energy-efficient instance types over older discounted ones can add 15-25% to your compute bill. Time-shifting batch jobs to align with low-carbon windows might require architectural changes that take engineering time to implement.

These costs are real. I’m not going to argue that they don’t exist or that they’re always offset by hidden savings. Sometimes you will pay more for lower-carbon infrastructure. The question isn’t whether the premium exists — it’s whether that premium buys you something beyond a feel-good metric.

It does.

What the Carbon Premium Actually Buys You

1. A Hedge Against Carbon Regulation

Carbon pricing is no longer a distant policy proposal. It’s active legislation in many parts of the world, and it’s expanding.

The EU Carbon Border Adjustment Mechanism (CBAM) began its transitional phase in 2023 and enters full enforcement in 2026. It requires importers to pay for the carbon embedded in goods entering the EU. While CBAM currently targets physical goods like steel, cement, and electricity, the EU has signaled intent to expand its scope. Digital services and cloud infrastructure are on the horizon.

The EU Corporate Sustainability Reporting Directive (CSRD), effective from 2024, requires large companies — including many technology firms — to disclose detailed environmental data, including Scope 3 emissions. Your cloud infrastructure emissions fall squarely into Scope 3. This isn’t optional reporting; it’s audited disclosure with financial penalties for non-compliance.

In the United States, the SEC’s climate disclosure rules (adopted in 2024, with phased implementation) require publicly traded companies to report material climate-related risks, including greenhouse gas emissions. California’s Climate Corporate Data Accountability Act goes further, requiring companies with revenue over $1 billion to disclose Scope 1, 2, and 3 emissions.

Several countries — Canada, the UK, Japan, South Korea — are implementing or expanding carbon pricing mechanisms that will eventually touch digital infrastructure.

Here’s what this means practically: if your organization is currently running workloads on high-carbon infrastructure because it’s cheaper, you’re accumulating regulatory exposure. When carbon pricing reaches cloud infrastructure — and the trajectory strongly suggests it will — the “cheap” regions become expensive overnight. Not because the cloud provider raised prices, but because your organization now owes a carbon tax on top of the compute cost.

Organizations that invest in carbon-aware infrastructure today are not paying a premium. They’re buying insurance against a regulatory future that’s arriving faster than most IT budgets account for.

2. Reduced Carbon Debt

In software engineering, we understand technical debt well. You make a shortcut today — skip the tests, hardcode the config, copy-paste the function — and it works fine for now. But the cost compounds. Eventually, you spend more fixing the debt than you would have spent doing it right in the first place.

Carbon debt works the same way.

Every month you run workloads on high-carbon infrastructure because it’s cheaper, you’re accumulating emissions that your organization will eventually need to account for. Maybe through offsets, maybe through regulatory compliance, maybe through customer pressure, maybe through all three.

The longer you run carbon-intensive infrastructure, the larger your cumulative emissions become, and the more expensive it gets to address them later. Carbon offsets — the most common retroactive solution — are getting more expensive every year. In 2020, high-quality carbon offsets cost around $5-10 per ton of CO₂. By 2025, prices for credible offsets have risen to $30-80 per ton, and projections suggest $100+ per ton by 2030.

Consider a mid-sized engineering organization running 200 compute instances across three regions. If the cheapest region emits 400 gCO₂/kWh more than a cleaner alternative, and each instance consumes an average of 200W, that’s roughly:

- 200 instances × 0.2 kW × 8,760 hours/year × 0.4 kgCO₂/kWh = ~140 tons CO₂/year

At current offset prices ($50/ton), that’s $7,000/year. At projected 2030 prices ($100/ton), it’s $14,000/year. And that’s just the offset cost — it doesn’t include the compliance reporting overhead, the audit costs, or the reputational risk.

Now compare that to the actual cost premium of running in a cleaner region — maybe $15,000-20,000/year more in compute costs. The gap between “cheap and dirty” and “slightly more expensive and clean” narrows every year as carbon costs rise. For many organizations, the crossover point — where the carbon-aware option becomes cheaper on a total-cost basis — is already here or approaching fast.

Paying the carbon premium now is like paying down technical debt before it compounds. It’s more expensive than doing nothing today, but cheaper than dealing with the consequences tomorrow.

3. Better Hardware, Better Performance

Here’s something that gets lost in the cost-vs-carbon framing: carbon-aware choices often align with better engineering decisions.

Choosing newer, energy-efficient instance types doesn’t just reduce carbon — it gives you faster processors, better memory bandwidth, improved network performance, and lower latency. ARM-based instances like AWS Graviton3 or Azure Cobalt consume significantly less power per computation while often outperforming their x86 equivalents on many workloads.

In the previous article, I showed how an ML training job in Oregon (us-west-2) on newer GPUs completed faster, cost less overall, and emitted far less carbon than the same job on older GPUs in Virginia. The carbon-aware choice was also the performance-optimal choice.

This isn’t always the case, but it’s common enough to challenge the assumption that carbon-aware means slower or less capable. Modern, efficient hardware tends to be both greener and faster. The premium you pay per hour is often offset by shorter execution times.

When you frame the decision as “pay 20% more per hour for a worse outcome,” it sounds like a bad deal. When you frame it as “pay 20% more per hour but finish 40% faster with better results,” the economics shift entirely.

4. Customer and Talent Expectations

This is the factor that’s hardest to put a dollar figure on, but it’s becoming impossible to ignore.

Enterprise customers increasingly require sustainability data from their vendors. RFPs now routinely include questions about carbon footprint, environmental certifications, and sustainability practices. Government procurement — especially in the EU and parts of Asia — is beginning to include carbon criteria in vendor evaluation.

If your competitors can demonstrate lower carbon intensity for their cloud operations and you can’t, that’s a competitive disadvantage that doesn’t show up in your infrastructure budget but absolutely shows up in your revenue pipeline.

On the talent side, engineers — particularly younger engineers entering the workforce — care about this. Not all of them, and not always as a deciding factor, but enough to matter for retention and recruitment. A company that can credibly say “we run carbon-aware infrastructure and here’s how” has a meaningful advantage over one that says “we optimize for cost and hope sustainability takes care of itself.”

5. Resilience Through Diversity

Carbon-aware architecture often requires distributing workloads across multiple regions based on grid carbon intensity, time-shifting batch processing, and designing for flexibility in where and when computation happens.

These are the same architectural patterns that improve resilience against outages, reduce latency for global users, and protect against regional pricing changes. A system designed to shift workloads based on carbon signals can also shift workloads based on availability signals or cost signals.

The investment in carbon-aware flexibility is also an investment in operational resilience. You’re not paying a premium for carbon alone — you’re paying for an architecture that’s more adaptable to multiple kinds of disruption.

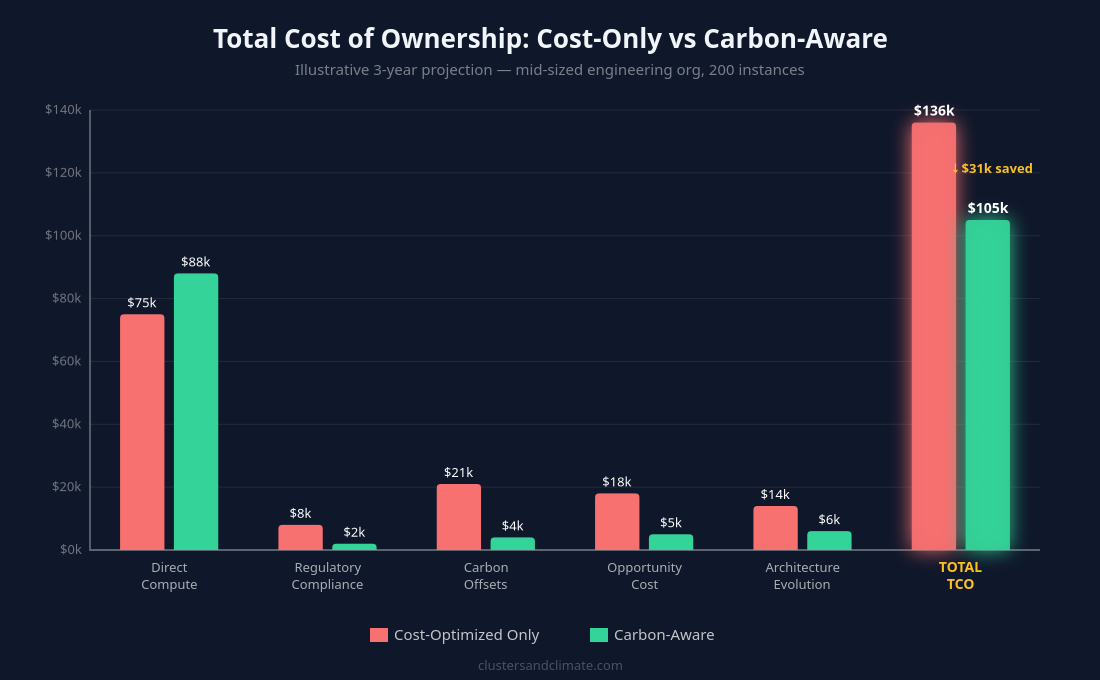

The Total Cost of Ownership Argument

Traditional cloud cost optimization looks at a narrow set of variables: compute cost per hour, storage cost per GB, network egress charges. The goal is to minimize the sum of these direct costs.

But total cost of ownership (TCO) for cloud infrastructure should include:

- Direct compute and storage costs (what we usually optimize for)

- Regulatory compliance costs (reporting, auditing, potential carbon taxes)

- Offset and remediation costs (if your organization commits to net-zero)

- Opportunity costs (lost contracts due to sustainability gaps)

- Architecture evolution costs (retrofitting carbon-awareness later vs. building it in now)

- Reputational risk (increasingly quantifiable through ESG ratings)

When you include all of these in the TCO calculation, the “cheap but dirty” option frequently loses to the “slightly more expensive but clean” option. The problem is that most infrastructure cost analyses don’t include these factors. They optimize for a partial picture and call it comprehensive.

I’m not suggesting every team needs to build a full TCO model before choosing a cloud region. But if your organization has sustainability commitments, carbon reduction targets, or operates in regulated markets, ignoring the broader cost picture is a financial risk, not a cost saving.

When Carbon-Aware Choices Save Money Too

I’ve been making the case for paying more, but it’s worth noting that carbon-aware decisions sometimes cost the same or less. Here are the most common scenarios:

Time-shifting batch workloads: Running heavy computation during off-peak hours when the grid is cleaner often coincides with lower electricity costs for the cloud provider, which can translate to lower spot instance prices. You’re not paying more — you’re paying differently.

Right-sizing with efficient instances: Moving from over-provisioned older instances to right-sized modern ones reduces both energy consumption and cost. This is the rare win-win that teams should pursue aggressively.

Shutting down idle resources: As I discussed earlier in this series, non-production environments running 24/7 waste both money and energy. Turning them off during idle periods saves cost and carbon simultaneously.

Consolidating workloads: Higher utilization per instance means fewer total instances, which means lower cost and lower energy consumption. Consolidation is almost always both cheaper and greener.

Serverless for sporadic workloads: Functions-as-a-service consume zero resources when idle. For bursty, low-frequency workloads, serverless is both the cheapest and the lowest-carbon option.

These aren’t hypothetical — they’re patterns I’ve seen reduce both cost and carbon by 30-50% when implemented together. Start with these before tackling the harder trade-offs where carbon-aware choices cost more.

A Framework for Making Carbon-Cost Decisions

Not every decision needs to be agonized over. Here’s a practical framework for evaluating carbon-aware options:

Tier 1: No trade-off required (do these immediately)

- Right-size instances to efficient modern types

- Shut down idle non-production resources

- Consolidate under-utilized workloads

- Use serverless for appropriate workloads

These reduce both cost and carbon. There’s no reason not to do them.

Tier 2: Small premium, large carbon benefit (do these next)

- Choose cleaner regions when the cost difference is less than 15%

- Use ARM-based instances where workloads are compatible

- Implement basic time-shifting for batch jobs

The cost premium is modest and the carbon reduction is significant. For most organizations, this is easily justifiable.

Tier 3: Meaningful premium, strategic value (evaluate case by case)

- Redesign architectures for multi-region carbon-aware scheduling

- Move production workloads to cleaner but more expensive regions

- Invest in carbon monitoring and optimization tooling

These require organizational buy-in and budget allocation. Evaluate them based on your organization’s sustainability commitments, regulatory exposure, and competitive landscape.

Tier 4: High premium, long-term positioning (plan for these)

- Carbon-neutral or carbon-negative infrastructure targets

- Real-time carbon-aware autoscaling across regions

- Investing in on-site renewable energy or direct power purchase agreements

These are strategic investments that make sense for organizations with strong sustainability mandates or those operating in markets where carbon regulation is imminent.

How to Build the Internal Case

If you’re an engineer or architect who sees the value but needs to convince leadership, here’s what I’ve seen work:

Lead with the numbers, not the narrative. “We can reduce our Scope 3 cloud emissions by 60% with a 12% increase in compute spend” is more persuasive than “we should be more sustainable.” Decision-makers respond to quantified trade-offs.

Connect to existing commitments. If your organization has published sustainability targets, net-zero pledges, or ESG commitments, carbon-aware infrastructure is a concrete path to delivering on those promises. It’s harder to dismiss a proposal that directly supports commitments the CEO already made in the annual report.

Start with Tier 1. Demonstrate that the first round of changes saves both cost and carbon. Once you’ve shown that green infrastructure isn’t inherently expensive, the conversation about paying a premium for Tier 2 and 3 becomes much easier.

Quantify the regulatory risk. Map out the carbon regulations affecting your markets, estimate potential compliance costs, and show how current infrastructure decisions create future exposure. Nothing gets budget approved faster than risk mitigation.

Frame it as architecture modernization. Many carbon-aware changes — newer instance types, better autoscaling, multi-region design — are also modernization improvements. Package them together and the carbon benefit becomes a bonus rather than the primary justification.

What I’m Not Saying

I want to be clear about what this article is not arguing:

I’m not saying every organization should maximize carbon reduction regardless of cost. Budget constraints are real, and some organizations genuinely cannot afford significant premiums on infrastructure.

I’m not saying carbon-aware infrastructure is always more expensive. As I’ve shown, many changes reduce both cost and carbon.

I’m not saying cost optimization is wrong. It’s essential. But it’s incomplete as the sole optimization target.

And I’m not saying that individual engineering teams should bear the full burden of these decisions. Carbon-aware infrastructure requires organizational commitment — budget allocation, metric tracking, and leadership support.

What I am saying is that when carbon-aware choices cost more, that cost is not wasted. It buys regulatory preparedness, reduced carbon debt, better hardware, competitive positioning, and architectural resilience. Whether the premium is worth it depends on your organization’s specific context — but the decision should be made with full information, not defaulted to “cheapest wins.”

The Trajectory Is Clear

Every signal points in the same direction: carbon is becoming a priced externality. Regulations are tightening. Reporting requirements are expanding. Customer expectations are rising. Offset costs are increasing.

Organizations that build carbon-aware infrastructure now are positioning themselves ahead of this curve. They’re paying a modest premium today to avoid a steep premium tomorrow. They’re making engineering decisions that happen to also be good sustainability decisions.

The teams that wait — that continue optimizing solely for cost and plan to “deal with carbon later” — will eventually face the same transition, but under time pressure, regulatory deadlines, and higher costs.

In climate science, we have a concept for this: the cost of delayed action. Every year of delay in reducing emissions doesn’t just maintain the problem — it makes the eventual solution more expensive and more disruptive. The same principle applies to cloud infrastructure. The carbon-aware transition is coming for every organization. The only question is whether you lead it or react to it.

The carbon premium isn’t a tax on doing the right thing. It’s an investment in doing the smart thing — before the market forces everyone’s hand.

This article is part of a series on Green Cloud Systems. Earlier articles covered why green cloud matters, the hidden energy cost of always-on dev/test clusters, practical implementation strategies, cost-aware architecture patterns, and why cost optimization alone doesn’t reduce carbon. Each article builds on the previous — if you’re just joining the series, I’d recommend starting from the beginning.